SPHERES — a testbed for experiments in zero gravity

If you were asked to picture an astronaut, you would probably imagine someone in a space suit, floating on the border between the blue curve of the Earth's atmosphere and the darkness of deep space. In practice, sending an astronaut outside the vehicle for an EVA (Extra-Vehicular Activity) is costly and hazardous, and many challenges had to be solved before a human being could be sent into such a hostile environment.

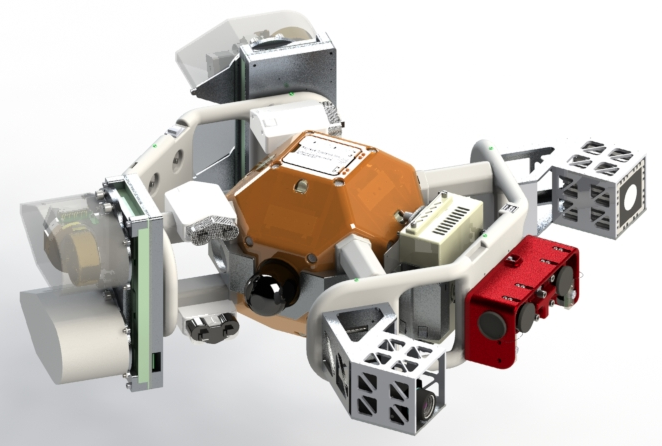

Robots are therefore good candidates to replace some EVAs for external damage inspection and repair, but safe and reliable navigation in space remains a major challenge. The SPHERES testbed was conceived and designed by the MIT Space Systems Lab, then sent to the International Space Station in the first decade of this century to allow scientists and astronauts to conduct experiments in real zero gravity: swarm robotics, vehicle rendezvous and docking, visual navigation, actuation through electromagnetic fields or gyroscopes, fluid mechanics, modular deployment, junior robot competitions, and more.

A new hardware extension to improve visual navigation

Among the challenges posed by visual navigation in space, accurate and reliable sensing is of great importance. In space, scenes are either extremely dark or too bright for common sensors, and the landscape includes large patches of uniform color with few visible details. This causes classical vision algorithms based on corner detection, which require detail and contrast, to fail. Another constraint is purely technical: it is difficult to embed much computing power in a small satellite that has to navigate without external help.

To cope with these issues, a new extension for the SPHERES nano-satellites, called HALO, was proposed (it was sent to the ISS at the end of 2016). It can support multiple cameras with different properties and merge their outputs into a more reliable and accurate representation of the external scene. In my thesis, I investigated different algorithms to perform this fusion between a 3D Time-of-Flight (ToF) camera, a stereoscopic camera setup and a thermographic camera.

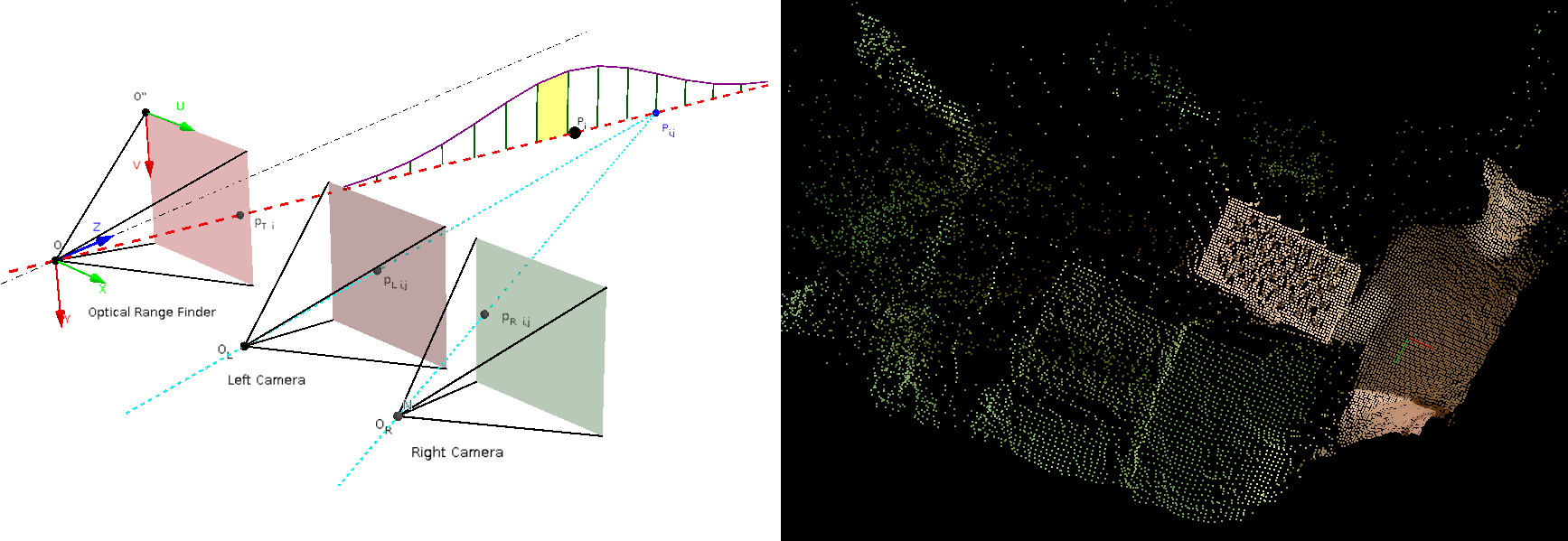

A probabilistic approach to fuse the sensor images

After discussions and a literature review, I focused on designing a procedure to automatically calibrate the ToF camera and the stereoscopic camera together, and on merging the dense 3D point clouds constructed from their outputs. Both tasks relied on probability theory and Bayesian estimation. Tests were performed with three degrees of freedom on an air-cushion table, then with six degrees of freedom during parabolic flight sessions in a NASA Zero-G plane.
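To give a feel for what Bayesian fusion of two depth sensors means, here is a minimal sketch. It is not the algorithm from the thesis: it assumes each sensor reports the depth of the same 3D point with independent Gaussian noise, and the function name `fuse_gaussian` and all numeric values are hypothetical, chosen only for illustration. Under those assumptions, the posterior estimate is the inverse-variance weighted mean of the two measurements.

```python
def fuse_gaussian(z_a, var_a, z_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.

    Returns the posterior mean and variance (inverse-variance weighting).
    """
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    w_a = 1.0 / var_a  # precision of the first sensor
    w_b = 1.0 / var_b  # precision of the second sensor
    fused_var = 1.0 / (w_a + w_b)
    fused_z = fused_var * (w_a * z_a + w_b * z_b)
    return fused_z, fused_var


# Hypothetical depth readings in meters (illustrative values only).
tof_depth, tof_var = 2.00, 0.04        # assumed ToF measurement and noise
stereo_depth, stereo_var = 2.20, 0.16  # assumed stereo measurement and noise

depth, var = fuse_gaussian(tof_depth, tof_var, stereo_depth, stereo_var)
print(f"fused depth: {depth:.3f} m, variance: {var:.4f} m^2")
```

The fused estimate is pulled toward the sensor with the smaller variance, and the fused variance is lower than either input. That benefit only holds when the assumed noise models match the real sensors.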

Although the automatic calibration method performed well, the fusion algorithm failed to show significant improvements. I concluded that the approach was not well suited to such low-accuracy sensors and mechanical setup, but there was no time to implement new ideas before the end of the thesis.

Going further

- Master's thesis PDF

- INSPECT paper at the 45th International Conference on Environmental Systems, 2015 — D. Steinberg, T. Sheerin, G. Urbain.

- NASA SPHERES, HALO.