A search problem for sound designers

Sound designers who produce Foley for films, TV and games typically maintain libraries of tens of thousands of recorded sounds — footsteps on gravel, doors slamming, cloth rustling, glass breaking, you name it. The problem is very simple to state: when you need “a door slamming that sounds tense”, how do you find it among 80,000 files?

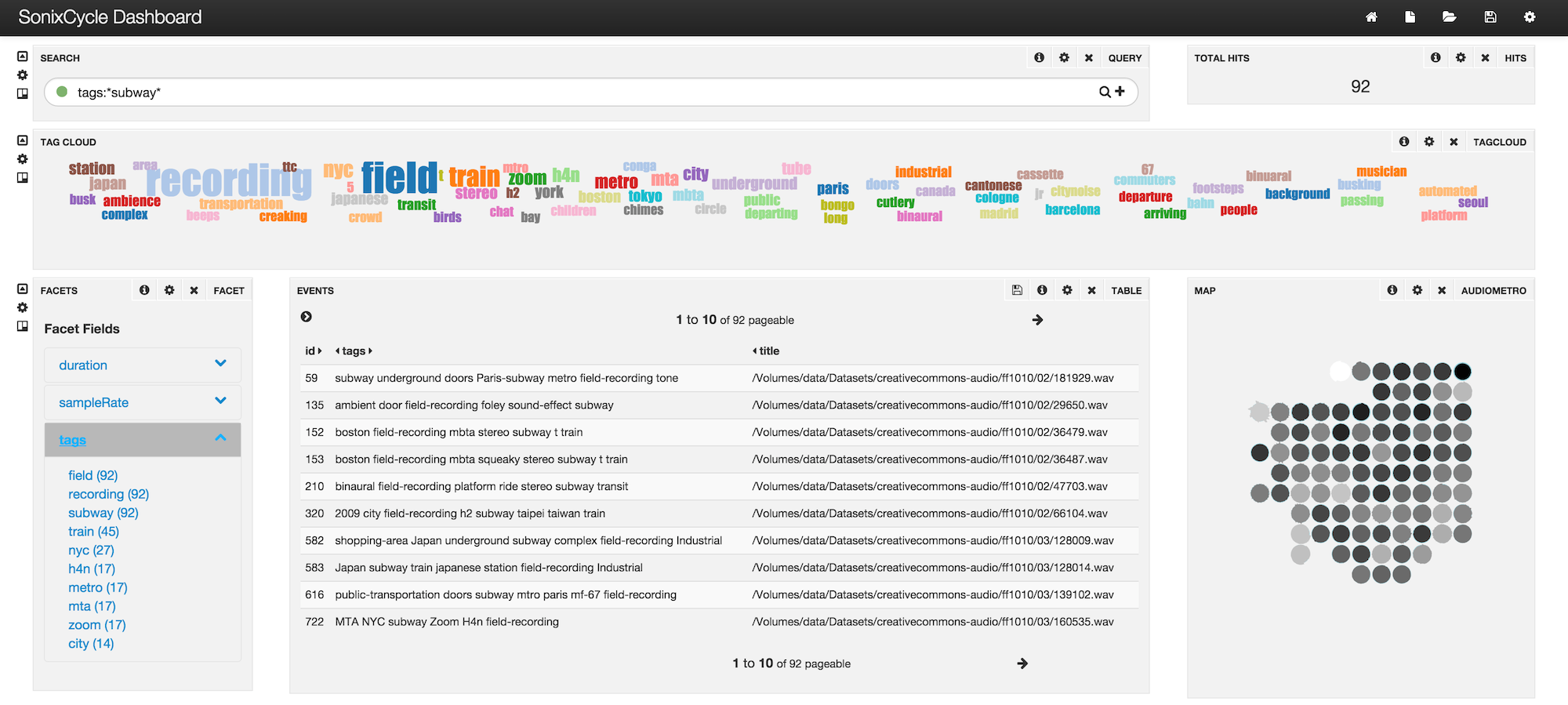

Traditional solutions rely on tags typed by a human at ingest time. This is slow, inconsistent across a team, and blind to everything that isn’t in the tag vocabulary. This R&D project, conducted at the numediart Institute of UMons in partnership with Dame Blanche SA and CETIC, aimed to complement text search with perceptual and semantic similarity, so that designers can browse the library by how it actually sounds.

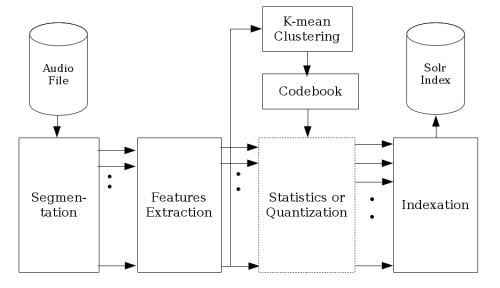

The pipeline

The core idea was to index each audio file at the level of its local perceptual content, not just its filename. For that we built a pipeline around the Apache Solr search engine:

- Segmentation. Every file is sliced into 10 ms windows; features (MFCC, timbre properties) are extracted per window.

- Feature clustering. A GPU-accelerated k-means clusters the per-window features into a learned perceptual vocabulary; a hierarchical classification layer on top then produces the semantic facet.

- Custom Solr similarity. Solr’s default similarity (TF-IDF / BM25) is designed for text, not for bags of audio features. We plugged in a similarity based on cosine distance in the audio-feature vector space, so that the same Solr front-end handles both textual and perceptual queries.

- Visualization. A t-SNE projection of the feature space lets the designer literally see regions of the library and explore adjacent sounds.
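The segmentation step can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the project's extractor: it assumes a mono signal at 16 kHz, non-overlapping 10 ms windows, and a textbook MFCC recipe (Hann window, zero-padded power spectrum, 26 triangular mel filters, log, orthonormal DCT-II keeping 13 coefficients). The helper names `frame_signal`, `mel_filterbank`, `dct_ii` and `mfcc` are hypothetical, and the "timbre properties" mentioned above are not covered.

```python
import numpy as np

# Assumption: mono audio at 16 kHz, non-overlapping 10 ms windows.

def frame_signal(x: np.ndarray, sr: int, win_ms: float = 10.0) -> np.ndarray:
    """Slice a mono signal into non-overlapping windows of win_ms milliseconds."""
    win = int(sr * win_ms / 1000)            # 160 samples at 16 kHz
    n_frames = len(x) // win                 # drop the incomplete tail
    return x[: n_frames * win].reshape(n_frames, win)


def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_mels: int, n_fft: int, sr: int) -> np.ndarray:
    """Triangular filters evenly spaced on the mel scale, shape (n_mels, n_fft // 2 + 1)."""
    mels = np.linspace(0.0, hz_to_mel(sr / 2), n_mels + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mels) / sr).astype(int)
    fb = np.zeros((n_mels, n_fft // 2 + 1))
    for i in range(n_mels):
        left, center, right = bins[i], bins[i + 1], bins[i + 2]
        for k in range(left, center):        # rising edge
            fb[i, k] = (k - left) / (center - left)
        for k in range(center, right):       # falling edge
            fb[i, k] = (right - k) / (right - center)
    return fb


def dct_ii(x: np.ndarray, n_coeffs: int) -> np.ndarray:
    """Orthonormal DCT-II along the last axis, keeping the first n_coeffs outputs."""
    n = x.shape[-1]
    k = np.arange(n_coeffs)[:, None]
    basis = np.cos(np.pi * k * (2 * np.arange(n)[None, :] + 1) / (2 * n))
    basis[0] *= np.sqrt(1.0 / n)
    basis[1:] *= np.sqrt(2.0 / n)
    return x @ basis.T


def mfcc(x: np.ndarray, sr: int = 16000, n_mels: int = 26,
         n_coeffs: int = 13, n_fft: int = 512) -> np.ndarray:
    """One n_coeffs-dimensional MFCC vector per 10 ms window."""
    frames = frame_signal(x, sr)
    frames = frames * np.hanning(frames.shape[1])               # taper each window
    power = np.abs(np.fft.rfft(frames, n=n_fft)) ** 2 / n_fft   # zero-padded power spectrum
    mel_energy = power @ mel_filterbank(n_mels, n_fft, sr).T
    return dct_ii(np.log(mel_energy + 1e-10), n_coeffs)         # log-mel -> cepstrum


sr = 16000
t = np.arange(sr) / sr                     # 1 s of audio
x = 0.5 * np.sin(2 * np.pi * 440.0 * t)    # synthetic 440 Hz tone as a stand-in
features = mfcc(x, sr)
print(features.shape)                      # (100, 13): one vector per 10 ms window
```

At 16 kHz a 10 ms window holds only 160 samples, so the native frequency resolution is 100 Hz; zero-padding to 512 points interpolates the spectrum but does not add resolution. That coarseness is the price of such fine time localisation.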
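The vocabulary-building step can be illustrated with plain Lloyd's k-means on the CPU; the project used a GPU-accelerated implementation, which is not reproduced here. The sketch assumes each sound is then summarised as a normalised histogram counting which codeword each of its windows falls into, one common way to obtain a bag-of-features representation for the similarity step. The vocabulary size `k = 8`, the synthetic Gaussian "features" and the names `nearest_centroid`, `kmeans` and `bag_of_audio_words` are hypothetical placeholders.

```python
import numpy as np

# Assumption: CPU Lloyd's k-means stands in for the project's GPU implementation.

def nearest_centroid(X: np.ndarray, centroids: np.ndarray) -> np.ndarray:
    """Index of the closest centroid (squared Euclidean distance) for each row of X."""
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)


def kmeans(X: np.ndarray, k: int, n_iter: int = 100, seed: int = 0) -> np.ndarray:
    """Lloyd's k-means; returns the k centroids, i.e. the learned vocabulary."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        labels = nearest_centroid(X, centroids)
        # Move each centroid to the mean of its members; leave empty clusters in place.
        updated = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                            for j in range(k)])
        if np.allclose(updated, centroids):
            break
        centroids = updated
    return centroids


def bag_of_audio_words(frame_features: np.ndarray, centroids: np.ndarray) -> np.ndarray:
    """Normalised histogram of codeword occurrences over all windows of one sound."""
    counts = np.bincount(nearest_centroid(frame_features, centroids), minlength=len(centroids))
    return counts / counts.sum()


rng = np.random.default_rng(42)
# Stand-ins for the per-window feature vectors of two different sounds.
sound_a = rng.normal(0.0, 0.3, size=(200, 13))
sound_b = rng.normal(3.0, 0.3, size=(150, 13))
vocabulary = kmeans(np.vstack([sound_a, sound_b]), k=8)
hist_a = bag_of_audio_words(sound_a, vocabulary)
hist_b = bag_of_audio_words(sound_b, vocabulary)
```

Normalising each histogram to sum to 1 makes sounds of different durations comparable, since a longer file otherwise contributes more windows to every bin.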
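Outside Solr, the ranking behaviour of a cosine-based similarity can be shown in a few lines of NumPy. This only illustrates the scoring; it is not the project's Solr/Lucene plug-in. The file names and four-bin histograms are hypothetical, and `cosine_scores` and `rank_by_cosine` are illustrative names.

```python
import numpy as np

# Illustration only: not the project's Solr/Lucene similarity plug-in.

def cosine_scores(query: np.ndarray, library: np.ndarray) -> np.ndarray:
    """Cosine similarity between one query vector and every row of `library`."""
    q = query / np.linalg.norm(query)
    rows = library / np.linalg.norm(library, axis=1, keepdims=True)
    return rows @ q


def rank_by_cosine(query, library, names, top_k=3):
    """Top-k (name, score) pairs. Descending similarity = ascending cosine distance (1 - similarity)."""
    scores = cosine_scores(query, library)
    order = np.argsort(-scores)[:top_k]
    return [(names[i], float(scores[i])) for i in order]


# Hypothetical library of four sounds, each summarised by a 4-bin feature histogram.
names = ["door_slam_01", "door_slam_02", "gravel_steps_01", "glass_break_01"]
library = np.array([
    [0.6, 0.3, 0.1, 0.0],
    [0.5, 0.4, 0.1, 0.0],
    [0.0, 0.1, 0.2, 0.7],
    [0.1, 0.0, 0.8, 0.1],
])
query = np.array([0.55, 0.35, 0.10, 0.0])  # histogram of a door slam the designer likes
results = rank_by_cosine(query, library, names)
print([name for name, _ in results])  # ['door_slam_01', 'door_slam_02', 'glass_break_01']
```

Because every vector is L2-normalised before the dot product, the score depends only on the shape of each feature distribution, not on its overall magnitude, which is what makes a cosine measure a natural fit for histogram-like representations.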
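To show what the t-SNE projection optimises, here is a compact NumPy sketch of exact t-SNE: Gaussian affinities calibrated to a target perplexity by binary search, Student-t similarities in 2-D, and gradient descent on the KL divergence with momentum and early exaggeration. It is O(n²) and only suitable for small illustrative sets; the perplexity, learning rate and iteration schedule are illustrative assumptions rather than the project's settings, and the names `_row_affinities` and `tsne` are hypothetical. A production system would normally call a library implementation instead.

```python
import numpy as np

# Assumption: parameter values are illustrative, not the project's settings.

def _row_affinities(d2: np.ndarray, target_entropy: float,
                    tol: float = 1e-5, max_iter: int = 100) -> np.ndarray:
    """p_{j|i} with entropy log(perplexity), found by binary search on beta = 1 / (2 sigma^2)."""
    d2 = d2 - d2.min()                        # shift for stability; entropy is unaffected
    beta, beta_lo, beta_hi = 1.0, 0.0, np.inf
    for _ in range(max_iter):
        p = np.exp(-beta * d2)
        sum_p = p.sum()
        entropy = np.log(sum_p) + beta * np.dot(d2, p) / sum_p
        if abs(entropy - target_entropy) < tol:
            break
        if entropy > target_entropy:          # too flat: narrow the Gaussian
            beta_lo = beta
            beta = beta * 2.0 if np.isinf(beta_hi) else (beta + beta_hi) / 2.0
        else:                                 # too peaked: widen it
            beta_hi = beta
            beta = (beta + beta_lo) / 2.0
    return p / sum_p


def tsne(X: np.ndarray, perplexity: float = 30.0, n_iter: int = 500,
         learning_rate: float = 50.0, seed: int = 0) -> np.ndarray:
    """Exact t-SNE to 2-D with momentum and early exaggeration."""
    n = X.shape[0]
    sq = np.sum(X ** 2, axis=1)
    D2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    P = np.zeros((n, n))
    for i in range(n):
        others = np.arange(n) != i
        P[i, others] = _row_affinities(D2[i, others], np.log(perplexity))
    P = np.maximum((P + P.T) / (2.0 * n), 1e-12)  # symmetric joint probabilities
    P *= 4.0                                      # early exaggeration
    rng = np.random.default_rng(seed)
    Y = rng.normal(scale=1e-4, size=(n, 2))
    velocity = np.zeros_like(Y)
    for it in range(n_iter):
        sq_y = np.sum(Y ** 2, axis=1)
        num = 1.0 / (1.0 + sq_y[:, None] + sq_y[None, :] - 2.0 * Y @ Y.T)  # Student-t kernel
        np.fill_diagonal(num, 0.0)
        Q = np.maximum(num / num.sum(), 1e-12)
        PQ = (P - Q) * num
        grad = 4.0 * (np.diag(PQ.sum(axis=1)) - PQ) @ Y  # gradient of KL(P || Q)
        momentum = 0.5 if it < 250 else 0.8
        velocity = momentum * velocity - learning_rate * grad
        Y = Y + velocity
        if it == 100:
            P /= 4.0                                  # switch off early exaggeration
    return Y


rng = np.random.default_rng(1)
# Two synthetic "sound families" in a 13-D feature space.
X = np.vstack([rng.normal(0.0, 0.3, size=(30, 13)),
               rng.normal(3.0, 0.3, size=(30, 13))])
Y = tsne(X, perplexity=10.0)
print(Y.shape)  # (60, 2)
```

Perplexity roughly sets how many neighbours each point "sees"; it should stay well below the size of the groups one hopes to see as separate regions on the map.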

Publication & patent

The interface and the indexing workflow are protected under patent EP3430535 / WO2017158159. The research paper was published at Audio Mostly 2016:

- G. Urbain, C. Frisson, A. Moinet, T. Dutoit. A Semantic and Content-Based Search User Interface for Browsing Large Collections of Foley Sounds. Proceedings of Audio Mostly 2016, ACM Digital Library.

Going further

- Numediart Institute: host lab at UMons.

- Apache Solr: search engine the system is built on.

- t-SNE: the visualization technique used for the perceptual map.